Running workloads on Kubernetes gives you power and flexibility — but it also means your application’s availability depends on the health of every single pod in your cluster.

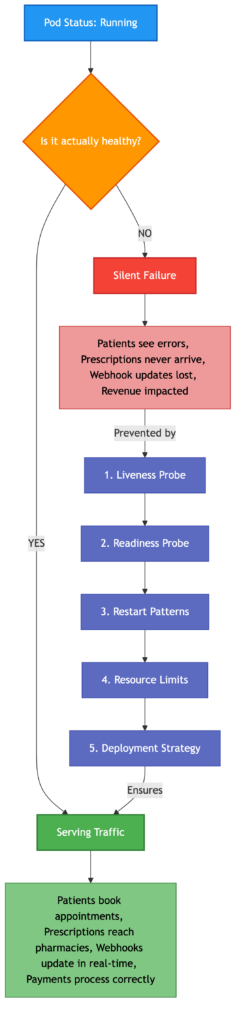

A pod can show “Running” status and still be completely broken. Database connections drop, memory leaks creep in, dependencies time out silently. Your users are getting errors while your dashboard shows green.

As a Full-Stack Software Engineer working on a healthcare platform, I’ve found myself wearing the DevOps/SRE hat more often than expected. In many teams — especially lean ones — infrastructure reliability doesn’t sit neatly within one role. The engineer who builds the API endpoints, integrates third-party services, and writes the frontend is often the same person troubleshooting why a pod failed to start at 2am.

The platform I work on allows patients to book appointments with doctors, receive electronic prescriptions, and get their medication dispensed through integrated pharmacy networks. The system handles real-time webhook updates, payment processing, patient notifications via WhatsApp and SMS, and video consultations.

In this context, an unhealthy pod isn’t just a technical issue — it’s a patient who can’t access their prescription, a pharmacy that never receives a dispensing request, or a doctor who loses connection mid-consultation. The stakes are different when healthcare is involved.

Here’s what I’ve learned matters most:

Liveness probes: Don’t just check if the process is alive. Verify it can actually do its job. A running process that can’t serve requests is worse than a crash, because at least a crash triggers a restart.
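One way to make a liveness check reflect real work rather than mere process existence is to have the application record a heartbeat and let the probe endpoint fail once that heartbeat goes stale. This is a minimal sketch under stated assumptions, not a definitive implementation: `Heartbeat`, `liveness_status`, and the 30-second threshold are hypothetical names and values chosen for illustration.

```python
import time


class Heartbeat:
    """Records the last time the application completed a unit of work."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._last_beat = clock()

    def beat(self):
        # Call this from the worker loop after each completed iteration.
        self._last_beat = self._clock()

    def age(self):
        # Seconds elapsed since the last recorded heartbeat.
        return self._clock() - self._last_beat


def liveness_status(heartbeat, max_age_seconds=30.0):
    """Return the HTTP status a liveness endpoint could respond with."""
    # For an HTTP probe, 200 counts as success and 503 as failure;
    # repeated failures cause the kubelet to restart the container.
    return 200 if heartbeat.age() <= max_age_seconds else 503
```

The threshold needs to be comfortably longer than the slowest legitimate unit of work, otherwise a busy but healthy pod gets restarted.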

Readiness probes: Your pod might be alive but not ready. During deployments, cold starts, or dependency failures, traffic hitting an unready pod means failed requests for your users.
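A readiness endpoint can aggregate a handful of dependency checks and report "not ready" if any of them fails. The sketch below assumes each check is a zero-argument callable returning a boolean; `readiness_status` and the check names are hypothetical, and a real service would plug in its own database or cache pings.

```python
def readiness_status(checks):
    """Run named dependency checks; return (http_status, failed_names)."""
    failed = []
    for name, check in checks.items():
        try:
            ok = bool(check())
        except Exception:
            # A check that raises is treated the same as one returning False.
            ok = False
        if not ok:
            failed.append(name)
    # 503 fails the readiness probe, so the pod stops receiving Service
    # traffic until the checks pass again. It is not restarted.
    return (200 if not failed else 503), failed
```

Returning the names of the failed checks makes the response body useful when you are debugging why a pod is stuck out of rotation.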

Pod restart patterns: A pod that restarts 5 times a day is telling you something. OOMKilled? CrashLoopBackOff? These are symptoms, not the disease. Investigate the root cause before it becomes a production incident.
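To spot restart patterns, you can summarise the container statuses that the Kubernetes API already exposes. The sketch below assumes its input is the parsed JSON from `kubectl get pods -o json`; the function name `restart_report` and the shape of its output are illustrative choices.

```python
def restart_report(pod_list, min_restarts=1):
    """Summarise restarting containers from a parsed pod list."""
    findings = []
    for pod in pod_list.get("items", []):
        pod_name = pod["metadata"]["name"]
        for cs in pod.get("status", {}).get("containerStatuses", []):
            restarts = cs.get("restartCount", 0)
            if restarts < min_restarts:
                continue
            # lastState.terminated.reason explains the previous exit
            # (e.g. OOMKilled); state.waiting.reason shows what the
            # container is stuck on now (e.g. CrashLoopBackOff).
            last = cs.get("lastState", {}).get("terminated", {}).get("reason")
            waiting = cs.get("state", {}).get("waiting", {}).get("reason")
            findings.append({
                "pod": pod_name,
                "container": cs["name"],
                "restarts": restarts,
                "last_exit_reason": last,
                "waiting_reason": waiting,
            })
    # Most frequently restarting containers first.
    return sorted(findings, key=lambda f: f["restarts"], reverse=True)
```

The report only tells you where to look. The root cause usually shows up in the previous container's logs and the pod's events.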

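Resource limits: Running close to memory or CPU limits is playing with fire. A container that exceeds its memory limit is OOM-killed without warning, CPU use above the limit is throttled, and a node under resource pressure can evict the pod outright. If you’re running a single replica, that kill or eviction means downtime.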
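A simple headroom check compares observed memory usage against the configured limit and flags containers that are getting close. The sketch below is an illustration only: the helper names and the 80% warning ratio are assumptions, and `parse_memory` handles only a common subset of Kubernetes quantity suffixes.

```python
# Binary and decimal suffixes commonly seen in memory quantities.
# This is a subset of the full Kubernetes quantity syntax.
_UNITS = {
    "Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40,
    "k": 10**3, "M": 10**6, "G": 10**9, "T": 10**12,
}


def parse_memory(quantity):
    """Convert a memory quantity such as '512Mi' to bytes."""
    for suffix, factor in _UNITS.items():
        if quantity.endswith(suffix):
            return int(float(quantity[: -len(suffix)]) * factor)
    return int(quantity)  # no suffix: a plain byte count


def memory_pressure(usage, limit, warn_ratio=0.8):
    """Return (usage/limit ratio, is_at_risk) for one container."""
    ratio = parse_memory(usage) / parse_memory(limit)
    return ratio, ratio >= warn_ratio
```

For example, `memory_pressure("450Mi", "512Mi")` flags the container as at risk because usage is roughly 88% of the limit.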

Deployment strategy matters: Not every cluster has the luxury of running multiple replicas. When resource constraints force you into a Recreate strategy instead of RollingUpdate, there’s a window where zero pods are serving traffic. If the new pod fails health checks, you’re fully down with no automatic fallback. Monitoring becomes your safety net.
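With a Recreate rollout, one inexpensive safety net is a post-deploy check that polls the service until it reports healthy and raises an alarm if that does not happen within a deadline. The following is a rough sketch, assuming the service exposes an HTTP health URL; `wait_until_ready`, `http_probe`, and the default timings are hypothetical.

```python
import time
import urllib.request


def http_probe(url, timeout=2.0):
    """Build a probe that succeeds when `url` answers with a 2xx/3xx."""
    def probe():
        # urlopen raises on connection errors and on 4xx/5xx responses.
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return 200 <= resp.status < 400
    return probe


def wait_until_ready(probe, timeout_seconds=120.0, interval_seconds=2.0,
                     clock=time.monotonic, sleep=time.sleep):
    """Poll `probe` until it returns True or the deadline passes.

    Returns the seconds waited on success, or None on timeout.
    """
    start = clock()
    while True:
        try:
            if probe():
                return clock() - start
        except Exception:
            pass  # treat any error as "not ready yet"
        if clock() - start >= timeout_seconds:
            return None
        sleep(interval_seconds)
```

A `None` result is the signal to page someone or trigger a rollback, and the returned duration doubles as a measurement of how long the zero-pod window actually lasted.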

The reality is simple: in a containerised environment, your infrastructure health IS your application health. No healthy pods, no service.

Whether you’re a dedicated SRE, a DevOps engineer, or a full-stack developer who inherited the infrastructure — if your team doesn’t have pod health visibility today, make it a priority this week. The cost of monitoring is minutes. The cost of an undetected failure is measured in lost patients and trust.
